Build a Client Escalation Ladder Before AI Handles Service Replies

AI can answer a routine client question quickly. That does not mean it knows when the question has stopped being routine.

That is the operating risk for real estate teams, service businesses, and CRM-heavy organizations in 2026. AI is being pushed into inboxes, chat widgets, phone summaries, text follow-up, help desks, review replies, and transaction updates just as customers expect faster, more contextual help. The weak point is not usually the first draft. It is the missing escalation rule that tells the system when speed should give way to human judgment.

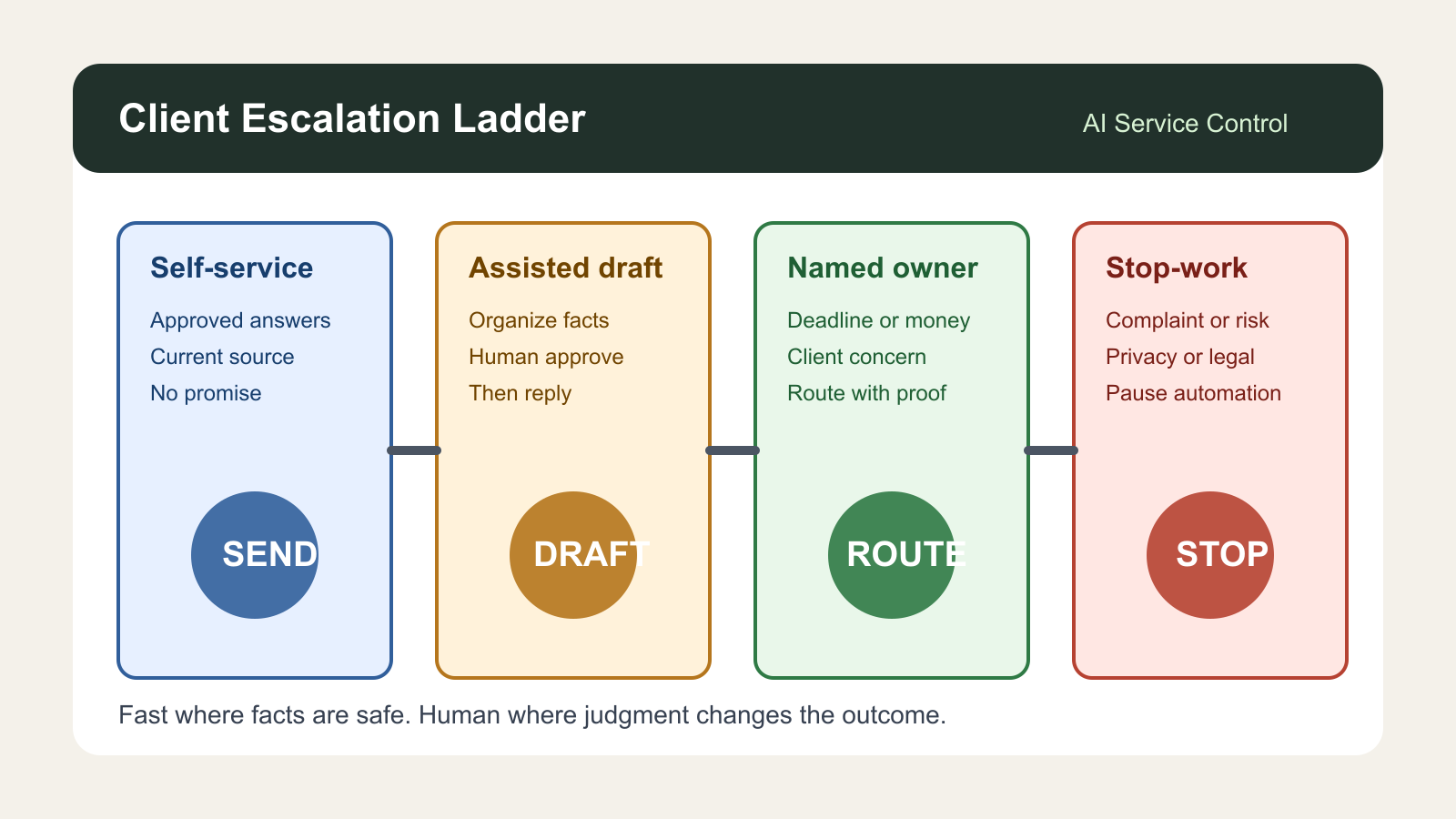

A client escalation ladder is the control surface that solves that problem. It defines which service requests AI can resolve, which ones it can draft but not send, which ones need a named owner, and which ones should immediately stop automation until a person reviews the situation. The ladder turns escalation from a vague instruction into a visible operating rule.

The timing matters. Gartner reported on April 28, 2026, that 85% of customer service and support leaders are expanding human agent responsibilities as AI reduces simple contact volume. Gartner also found that customers still place more trust in human agents than in AI for product or service recommendations, especially where judgment matters. Pega and YouGov reported in February 2026 that 64% of consumers were not confident in how businesses use generative AI in customer interactions, while 77% said they often or always get better outcomes with human-only support. That is not an argument against AI. It is an argument against ungoverned AI service.

Real estate makes the issue sharper because many service replies carry financial, emotional, or legal weight. A buyer asking whether the earnest money deadline moved is not just asking for an update. A seller asking why showings slowed may be asking for pricing advice. A past client asking about a contractor, insurance claim, tax notice, or refinance email may be exposing a referral opportunity, a compliance risk, or a trust moment. The AI assistant can help with all of those, but only if it knows where the handoff line sits.

What the ladder should separate

Start with four lanes, not a complex routing model.

Lane one is safe self-service. These are answers that can be handled from approved knowledge, recent CRM state, and low-risk context. Examples include office hours, document upload instructions, appointment confirmations, general process reminders, and links to already-approved resources. AI can answer these directly when the source is current and the message does not create a promise.

Lane two is assisted draft. These are messages AI may prepare, but a person should approve before sending. Examples include buyer education, listing feedback summaries, vendor scheduling updates, showing recap notes, and explanations of next steps where the facts are still being assembled. The AI is useful here because it organizes context and saves time. It should not become the sender of record until a human confirms the facts.

Lane three is named-owner escalation. These are requests that need a specific person, not a generic team inbox. Examples include pricing objections, financing confusion, inspection pressure, appraisal disappointment, complaint language, missed expectations, closing delays, cancellation risk, commission questions, and referral partner friction, along with anything that references money, deadlines, legal terms, discrimination, safety, or client dissatisfaction. AI can summarize and route. It should not resolve.

Lane four is stop-work. These are cases where automation should pause across channels until the owner reviews the account. Examples include an angry client, a potential fair housing issue, a data privacy concern, a disputed review, a threat to terminate representation, a request for legal or tax advice, a wire-fraud signal, or a client asking the business to stop contacting them. The system should create an internal incident, preserve the context, and prevent follow-up sequences from continuing as if nothing happened.

The ladder is valuable because it creates different permissions for reading, drafting, sending, routing, and pausing. Many teams only ask whether AI can answer. The better question is which action the AI is allowed to take at this risk level.
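One way to make those permissions concrete is a small lookup table. The sketch below is one reading of the four lanes, not a prescribed implementation: the `Lane` and `Action` names and the exact action sets are illustrative assumptions, and a real team would tune them to its own policy.

```python
from enum import Enum


class Lane(Enum):
    SELF_SERVICE = 1
    ASSISTED_DRAFT = 2
    NAMED_OWNER = 3
    STOP_WORK = 4


class Action(Enum):
    READ = "read"
    DRAFT = "draft"
    SEND = "send"
    ROUTE = "route"
    PAUSE = "pause"


# Hypothetical permission table: which actions the AI may take in each lane.
# Only the self-service lane lets the AI send a client-facing message.
PERMITTED = {
    Lane.SELF_SERVICE: {Action.READ, Action.DRAFT, Action.SEND},
    Lane.ASSISTED_DRAFT: {Action.READ, Action.DRAFT},
    Lane.NAMED_OWNER: {Action.READ, Action.ROUTE},
    Lane.STOP_WORK: {Action.READ, Action.PAUSE},
}


def is_permitted(lane: Lane, action: Action) -> bool:
    """Return True if the AI may take this action in this lane."""
    return action in PERMITTED[lane]
```

With a table like this, the question is no longer "can AI answer?" but `is_permitted(lane, Action.SEND)`, which is false everywhere except the self-service lane.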

Give AI escalation evidence, not vibes

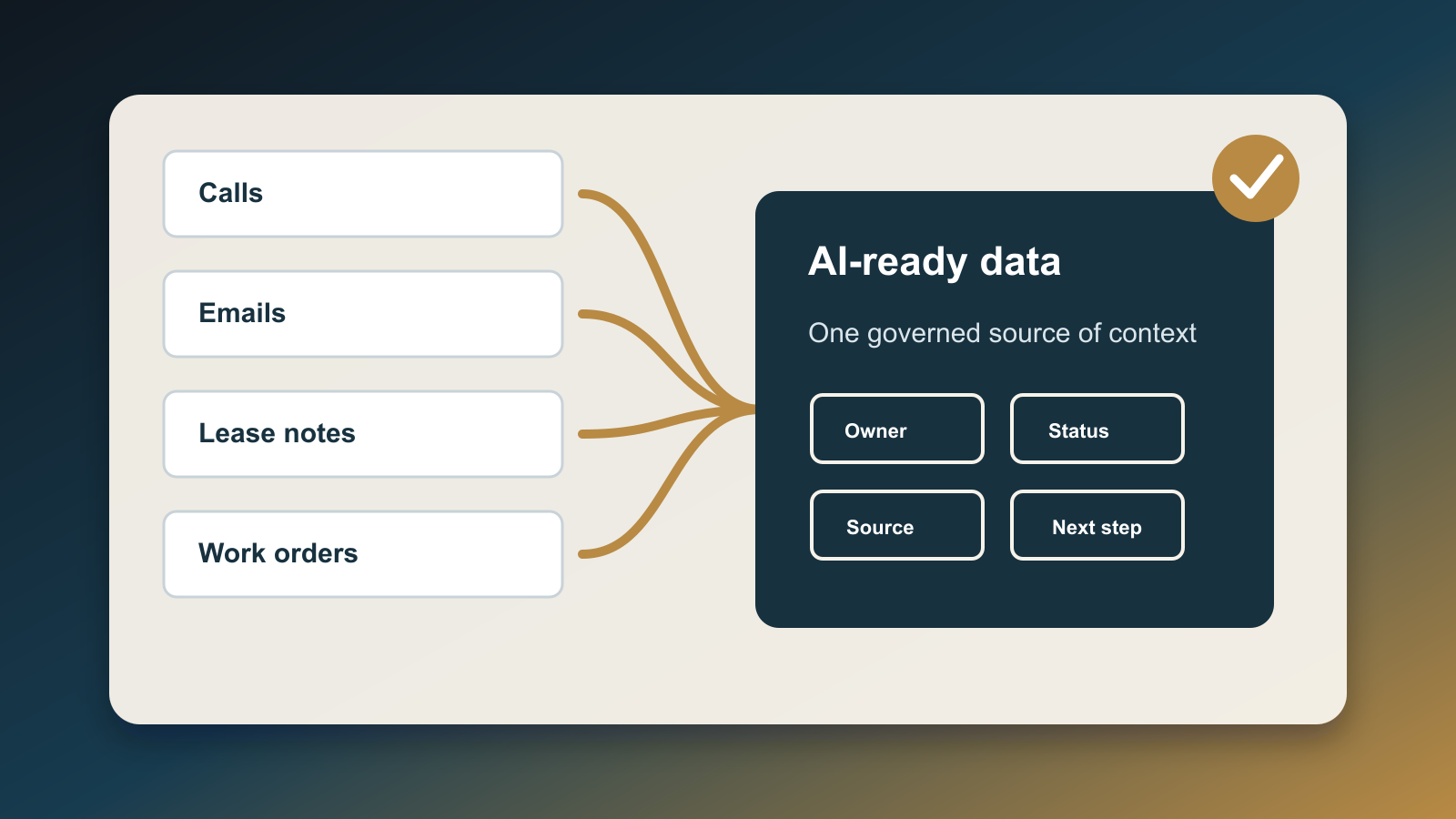

A useful ladder needs evidence fields in the CRM or service desk. It should not depend on the AI guessing sentiment from a single message.

Create fields for issue type, client stage, financial exposure, deadline proximity, sentiment, prior unresolved touches, owner, escalation reason, promised next step, next-review time, and allowed AI action. Add a source timestamp for each important fact. If the AI cannot see whether the deadline, amount, owner, or client stage is current, the allowed action should drop from send to draft or from draft to route.
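The downgrade rule can be sketched in a few lines. This is a minimal illustration, assuming each important fact is stored with a value and a source timestamp; the `Fact` class, the field names, and the `allowed_action` helper are hypothetical and not tied to any particular CRM.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional

# Ordered from most to least autonomous; a downgrade moves one step right.
ACTION_ORDER = ["send", "draft", "route"]

# Facts named in the text that must be visibly current before acting.
REQUIRED_FACTS = ("deadline", "amount", "owner", "client_stage")


@dataclass
class Fact:
    value: Optional[str]
    source_timestamp: Optional[datetime]


def downgrade(action: str) -> str:
    """Drop one level: send -> draft, draft -> route, route stays route."""
    i = ACTION_ORDER.index(action)
    return ACTION_ORDER[min(i + 1, len(ACTION_ORDER) - 1)]


def allowed_action(requested: str, facts: dict[str, Fact]) -> str:
    """Downgrade the requested action if any required fact lacks a value or timestamp."""
    for name in REQUIRED_FACTS:
        fact = facts.get(name)
        if fact is None or fact.value is None or fact.source_timestamp is None:
            return downgrade(requested)
    return requested
```

The sketch only checks that a timestamp exists. Whether that timestamp is recent enough is a separate question for a freshness threshold.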

Zendesk's 2026 CX Trends release points in the same direction: customers want continuity, quick resolution, and transparent AI decisions. Zendesk reported that 81% of consumers want agents to continue the conversation without forcing them to repeat context, and 95% expect explanations for AI-made decisions. That expectation applies directly to escalation. A client should not have to restate the same issue because the CRM lost the thread, and the business should be able to explain why AI routed the case to a person.

The ladder should also include freshness thresholds. If a transaction note is older than 24 hours, a showing objection is older than the latest feedback batch, a lender update is older than the last condition request, or a complaint has not been acknowledged by the owner, AI should not write as though the file is current. Fast replies built from stale context are worse than slower replies with verified context.
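A freshness check along these lines might look like the sketch below. It covers the two kinds of staleness described above: a fixed age limit (the 24-hour note rule) and being superseded by a newer related event (a later feedback batch or condition request). The function and parameter names are assumptions for illustration.

```python
from datetime import datetime, timedelta
from typing import Optional

# From the text: a transaction note older than 24 hours is not current.
MAX_NOTE_AGE = timedelta(hours=24)


def is_stale(
    recorded_at: datetime,
    now: datetime,
    max_age: Optional[timedelta] = None,
    superseded_by: Optional[datetime] = None,
) -> bool:
    """Stale if older than max_age, or recorded before a newer related event."""
    if max_age is not None and now - recorded_at > max_age:
        return True
    if superseded_by is not None and recorded_at < superseded_by:
        return True
    return False
```

For example, `is_stale(note_time, now, max_age=MAX_NOTE_AGE)` covers the transaction-note rule, while `is_stale(objection_time, now, superseded_by=latest_feedback_batch)` covers a showing objection that predates the latest feedback batch. Here `note_time`, `objection_time`, and `latest_feedback_batch` stand in for timestamps pulled from the CRM.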

Design the human role before volume drops

The biggest mistake is treating escalation as an exception after AI is already live. The human role should be redesigned first.

If AI handles more basic messages, the remaining human workload gets denser. The person is not just answering fewer tickets. They are handling higher-stakes exceptions, relationship repair, negotiation, judgment calls, compliance concerns, and ambiguous client emotion. Gartner's April 2026 service research describes this as workforce redesign rather than simple replacement. The lesson for smaller operators is practical: define the review owner, service standard, decision rights, and escalation notes before automation starts reducing the easy volume.

For a real estate team, the escalation owner may be the lead agent, transaction coordinator, listing manager, buyer specialist, broker, marketing lead, or operations manager depending on the lane. The ladder should make that ownership visible. A message about a showing appointment may go to an assistant. A message about price strategy goes to the listing lead. A message about financing doubt goes to the buyer agent and transaction coordinator. A complaint about representation goes to the broker or team lead.
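The routing examples above can be written down as a plain ownership map. The issue-type keys and role labels below are illustrative assumptions; the one deliberate design choice is that an unmapped issue type raises an error rather than falling back to a generic inbox.

```python
# Hypothetical ownership map based on the examples in the text.
OWNERS_BY_ISSUE = {
    "showing_appointment": ["assistant"],
    "price_strategy": ["listing_lead"],
    "financing_doubt": ["buyer_agent", "transaction_coordinator"],
    "representation_complaint": ["broker_or_team_lead"],
}


def owners_for(issue_type: str) -> list[str]:
    """Return the named owners for an issue type, or fail loudly if none is configured."""
    try:
        return OWNERS_BY_ISSUE[issue_type]
    except KeyError:
        raise ValueError(
            f"No named owner configured for issue type {issue_type!r}"
        ) from None
```

Failing loudly on an unknown issue type surfaces the gap during setup instead of during a client conversation.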

Ownership beats generic urgency. Without ownership, AI can create a polished summary and still leave the client waiting.

Connect escalation to trust and referral value

AI service replies are not only a cost-control system. They are part of the trust system.

NAR's 2026 generational trends coverage reported that 88% of buyers purchased through a real estate agent and 91% of sellers worked with an agent. NAR's February 2026 RPR survey also showed that real estate professionals are using AI, but accuracy, compliance, and client-facing use remain top concerns. That combination is the business case for a ladder: clients still rely on human expertise, while teams are already using AI inside the work.

A client escalation ladder protects both sides. It lets AI keep routine service responsive while reserving human attention for the moments that create risk, loyalty, and referrals. It also gives team leaders a measurable way to improve operations. Every escalation should produce a reason code. Every reason code should become either a better knowledge-base answer, a clearer process, a new CRM field, or a tighter stop rule.

This is how service automation gets better without becoming reckless. The goal is not to keep humans in every reply. The goal is to keep humans in the replies where their judgment changes the outcome.

Build it this week

Start with the last 30 service conversations, transaction updates, client complaints, showing objections, and post-close questions. Mark each one as self-service, assisted draft, named-owner escalation, or stop-work. Then identify the evidence that would have helped the AI classify it correctly.

Next, create the minimum CRM fields: service lane, escalation reason, owner, due time, source timestamp, and allowed AI action. Add a simple policy that AI cannot send messages involving money, deadlines, legal language, complaint resolution, negotiation, safety, privacy, or client dissatisfaction unless the lane explicitly allows it.
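That send policy reduces to a small set comparison. The sketch below assumes messages have already been tagged with topics by some upstream step (how that tagging happens is out of scope here), and the topic labels and `may_send` helper are hypothetical.

```python
# Topics the policy blocks from AI sending by default, taken from the text.
RESTRICTED_TOPICS = frozenset({
    "money",
    "deadlines",
    "legal_language",
    "complaint_resolution",
    "negotiation",
    "safety",
    "privacy",
    "client_dissatisfaction",
})


def may_send(message_topics: set[str], lane_allowed_topics: set[str]) -> bool:
    """AI may send only if every restricted topic in the message is explicitly allowed by the lane."""
    blocked = (message_topics & RESTRICTED_TOPICS) - lane_allowed_topics
    return not blocked
```

A message tagged `{"office_hours"}` passes with no allowances, while one tagged `{"deadlines"}` is blocked unless the lane's allowed set includes `"deadlines"`.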

Finally, review the ladder every week for the first month. Count how many cases AI resolved, how many it drafted, how many it escalated, and how many it should have escalated sooner. The last number is the one that matters most because it reveals where the business is asking automation to carry judgment it has not earned.
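The weekly count can be as simple as a tally over logged cases. This sketch assumes each case is a dictionary with an `outcome` of `"resolved"`, `"drafted"`, or `"escalated"`, plus an optional `escalated_late` flag that a reviewer sets by hand; both field names are illustrative.

```python
from collections import Counter


def weekly_review(cases: list[dict]) -> dict[str, int]:
    """Tally the four numbers to review each week."""
    outcomes = Counter(case["outcome"] for case in cases)
    # A case counts as late if a reviewer flagged that it should have escalated sooner.
    late = sum(1 for case in cases if case.get("escalated_late", False))
    return {
        "resolved": outcomes["resolved"],
        "drafted": outcomes["drafted"],
        "escalated": outcomes["escalated"],
        "should_have_escalated_sooner": late,
    }
```

The late flag is tracked separately from the outcome because a case the AI resolved or drafted can still be one that should have gone to a person earlier.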

Before AI handles service replies, build the escalation ladder. Let automation be fast where the facts are safe, helpful where the facts need organizing, and quiet where the client needs a person.

Written by

Ben Laube

AI Implementation Strategist & Real Estate Tech Expert

Ben Laube helps real estate professionals and businesses harness the power of AI to scale operations, increase productivity, and build intelligent systems. With deep expertise in AI implementation, automation, and real estate technology, Ben delivers practical strategies that drive measurable results.