Build a School Boundary Evidence File Before AI Answers Buyers

School questions are back in the operational risk zone for real estate teams. On April 24, 2026, HUD said real estate professionals are not violating the Fair Housing Act merely by sharing school quality or crime data with prospective buyers when the information is provided consistently and without discriminatory intent. The same announcement pointed to a Dear Colleague letter from HUD's Office of Fair Housing and Equal Opportunity.

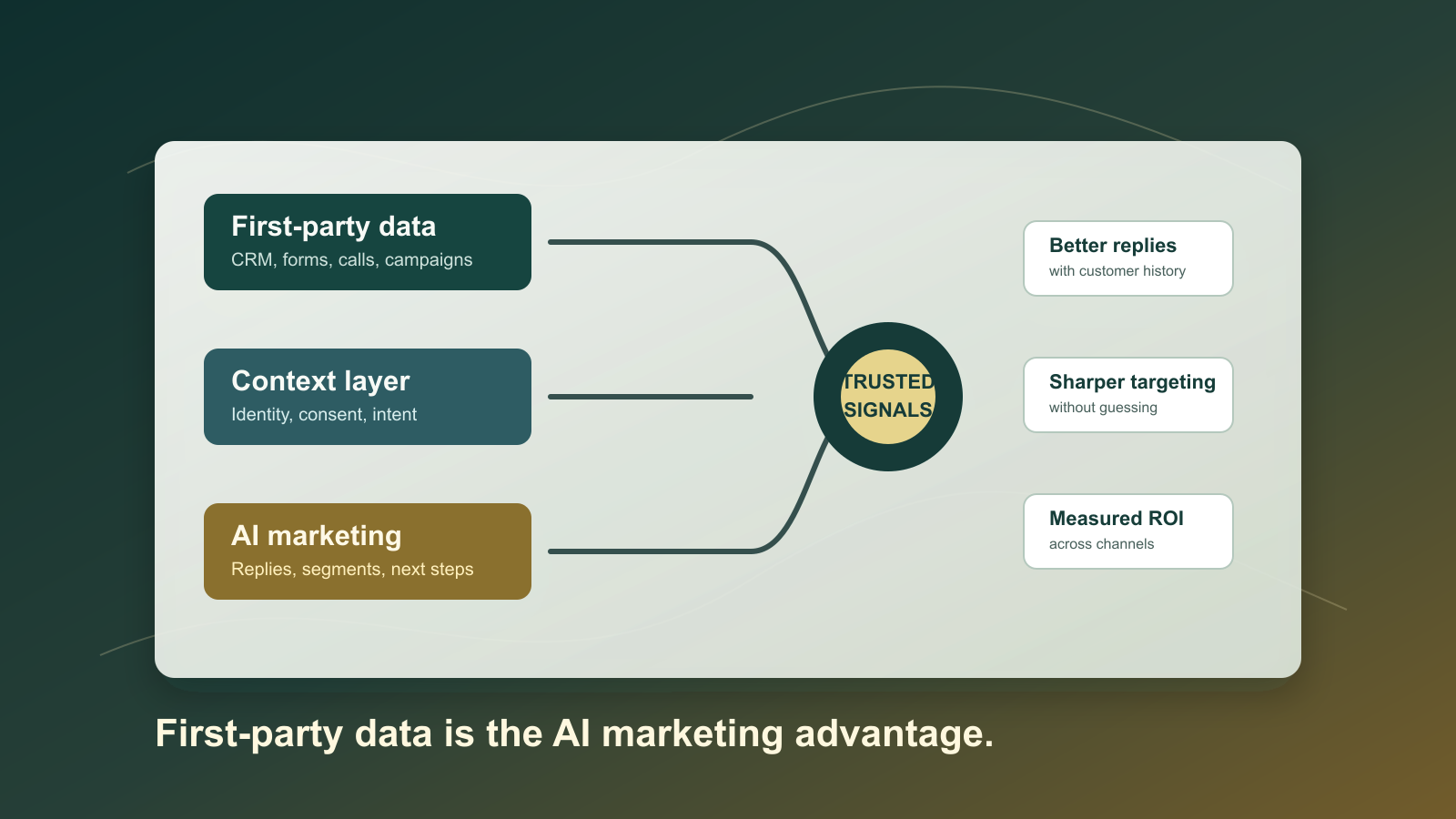

That does not mean an AI assistant should start improvising school advice from memory, search snippets, or an agent's opinion. It means the brokerage needs a cleaner source layer. If buyers ask about schools, the AI should draw from an evidence file that shows where the fact came from, when it was last checked, what geographic boundary was used, and where the human handoff begins.

NAR's 2025 Technology Survey found that AI-generated content is already part of the real estate workflow for many agents. That makes this less theoretical than it sounds. A buyer asks a normal question, the CRM has enough context to personalize the reply, and the assistant can produce confident language in seconds. Without source controls, the answer can blend school district boundaries, attendance zones, rating sites, agent opinion, and buyer preferences into one polished message that no one can audit later.

The right response is not to ban the topic. The right response is to separate objective data, client-provided preferences, and professional judgment before the AI is allowed to write.

The evidence file starts with geography

School data fails first at the boundary level. A property can sit inside one school district, but attendance rules may depend on grade span, program, capacity, transportation policy, magnet eligibility, open enrollment, or a district reassignment. A public map can be current at the district level while still being incomplete for the school a child may actually attend.

NCES's EDGE program is useful because it treats school districts as geographic entities and notes that district boundaries are updated annually from state education officials. That is a strong starting point for a brokerage data file. It gives the AI a formal source for district-level geography instead of letting it infer districts from listing copy or a consumer portal.

Attendance boundaries need even more caution. NCES's School Attendance Boundary Survey explains that its attendance boundary collection covers specific past school years and does not define feeder patterns between school levels. That limitation is exactly the kind of note that belongs in the evidence file. If the AI can see the limitation, the answer can say what is known, what is not known, and what the buyer should verify directly with the school district.

A useful school evidence record should include:

- Property address or parcel reference.

- Source type, such as district boundary, attendance boundary, district locator, state education record, or client-provided link.

- Source URL and access date.

- School year or effective period.

- Grade span covered.

- Boundary confidence, such as confirmed, likely, conflicting, or stale.

- Required human follow-up when school assignment is material to the buyer.

This does not need to be complicated. It needs to be structured enough that the AI never treats a weak source as a confirmed answer.
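The fields listed above can be sketched as a small typed record. This is a minimal illustration, not a prescribed schema: the class names, field names, and the example address and URL are all hypothetical, and a real CRM would map them to its own objects.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class SourceType(Enum):
    DISTRICT_BOUNDARY = "district boundary"
    ATTENDANCE_BOUNDARY = "attendance boundary"
    DISTRICT_LOCATOR = "district locator"
    STATE_EDUCATION_RECORD = "state education record"
    CLIENT_PROVIDED_LINK = "client-provided link"


class Confidence(Enum):
    CONFIRMED = "confirmed"
    LIKELY = "likely"
    CONFLICTING = "conflicting"
    STALE = "stale"


@dataclass(frozen=True)
class SchoolEvidenceRecord:
    property_ref: str        # property address or parcel reference
    source_type: SourceType
    source_url: str
    accessed_on: date        # access date for the source
    school_year: str         # school year or effective period
    grade_span: str          # grade span the source covers
    confidence: Confidence
    human_follow_up: bool    # True when school assignment is material to the buyer

    def is_confirmed(self) -> bool:
        """Only a record marked confirmed may be presented as a confirmed answer."""
        return self.confidence is Confidence.CONFIRMED


# Hypothetical example values, for illustration only.
record = SchoolEvidenceRecord(
    property_ref="123 Example St",
    source_type=SourceType.DISTRICT_LOCATOR,
    source_url="https://example.org/district-locator",
    accessed_on=date(2026, 5, 1),
    school_year="2025-26",
    grade_span="K-5",
    confidence=Confidence.LIKELY,
    human_follow_up=True,
)
print(record.is_confirmed())  # False
```

Using enums for source type and confidence keeps free-text values out of the record, so a "likely" source cannot quietly pass as "confirmed".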

Separate school facts from school opinions

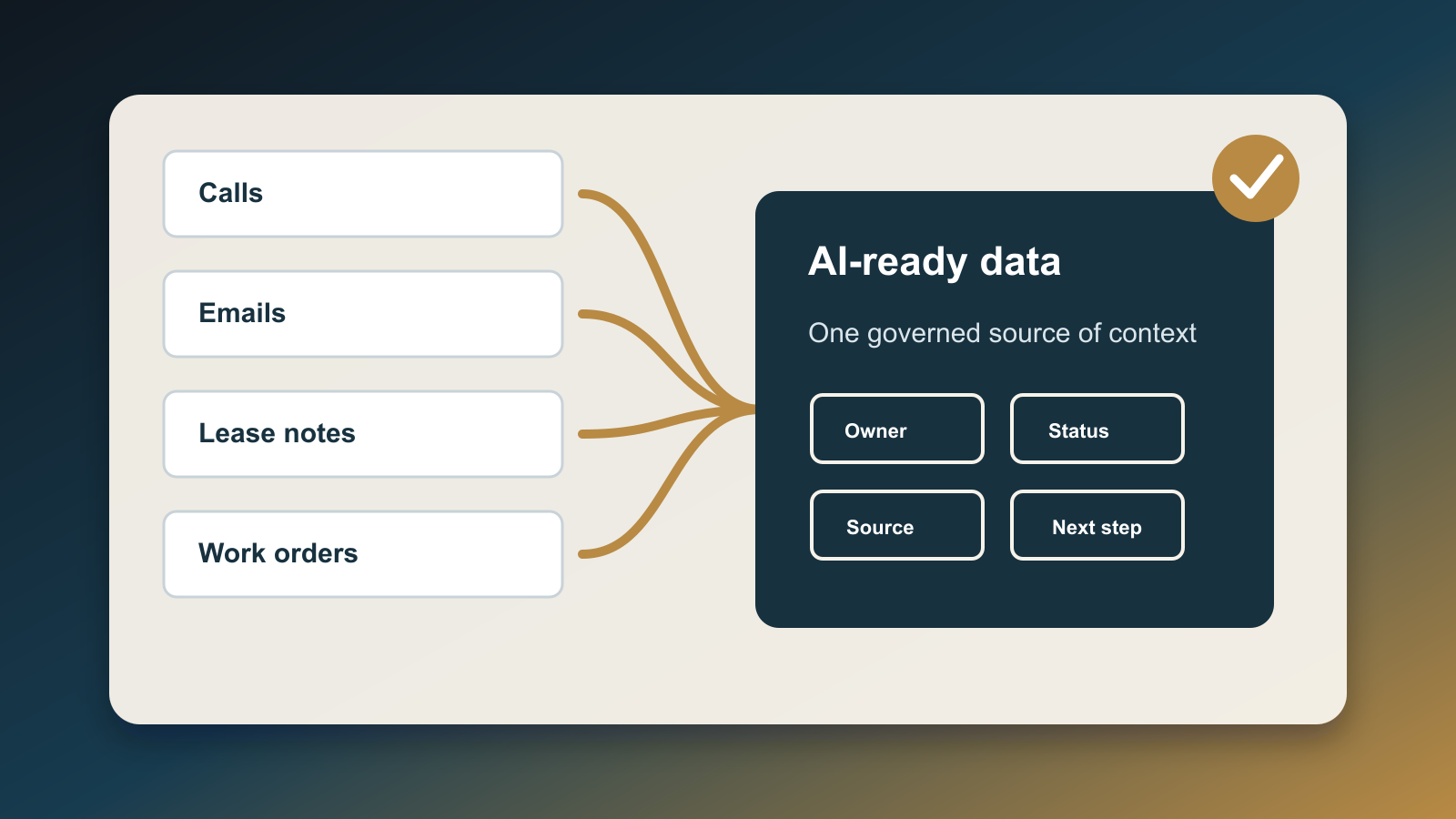

The operational mistake is putting every school-related item into one field called "notes." A note that says "buyer wants strong schools" is not the same as a public school district boundary. A third-party rating is not the same as a district assignment. An agent's lived local knowledge is not the same as an official source.

Create separate CRM fields or objects for three categories:

- Objective records: district locator links, NCES or state education references, school district pages, attendance maps, and access dates.

- Buyer criteria: commute tolerance, program needs, school-year timing, grade level, transportation constraints, and any schools the buyer names.

- Restricted commentary: subjective school quality opinions, demographic shorthand, neighborhood desirability language, or unsupported claims.

The AI can use the first two categories with guardrails. The third category should trigger review or be excluded from buyer-facing drafts. That distinction lets the team respond helpfully without turning the assistant into a source of steering risk or inaccurate advice.
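One way to picture the separation is a container with three distinct fields and a single function that decides what the assistant is allowed to see. The names below (`SchoolCrmFields`, `build_ai_context`) are hypothetical, and this is a sketch under the assumption that each category is stored as a simple list of entries.

```python
from dataclasses import dataclass, field


@dataclass
class SchoolCrmFields:
    objective_records: list[str] = field(default_factory=list)
    buyer_criteria: list[str] = field(default_factory=list)
    restricted_commentary: list[str] = field(default_factory=list)


def build_ai_context(fields: SchoolCrmFields) -> dict:
    """Return only what a buyer-facing draft may use, plus a review flag."""
    return {
        "objective_records": list(fields.objective_records),
        "buyer_criteria": list(fields.buyer_criteria),
        # Restricted commentary is never passed through to the assistant.
        # Its presence only flags the conversation for human review.
        "needs_review": bool(fields.restricted_commentary),
    }


crm = SchoolCrmFields(
    objective_records=["district locator link, accessed 2026-05-01"],
    buyer_criteria=["needs grade 3 placement", "20-minute commute limit"],
    restricted_commentary=["agent opinion about school quality"],
)
context = build_ai_context(crm)
print(context["needs_review"])  # True
```

The design choice here is that the restricted text itself never enters the prompt context; only the fact that it exists does.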

Add a response policy the AI can follow

A school-boundary evidence file is only useful if the AI has a response policy attached to it. The policy should be simple enough to execute automatically.

When a buyer asks about schools, the AI should first look for a current evidence record. If it finds one, the answer should cite the source type and recommend direct verification with the school district before a decision is made. If it finds conflicting records, stale data, missing grade-span coverage, or a subjective agent note, it should route the question to a human.

The answer should not rank neighborhoods, infer family status, make demographic assumptions, or turn school data into a recommendation that a buyer should or should not live somewhere. It can provide objective source links, summarize what the source appears to show, and ask whether the buyer wants help verifying details with the district.
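The policy described above can be expressed as a single decision function. This is a sketch under stated assumptions: the `Evidence` shape, the status strings, and the returned dictionary keys are hypothetical, and "grade needed" stands in for whatever grade-span check the brokerage actually uses.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    source_type: str
    source_url: str
    confidence: str            # "confirmed", "likely", "conflicting", or "stale"
    grades_covered: frozenset  # grades the source covers, e.g. frozenset({"K", "1"})
    has_subjective_note: bool = False


def school_response_policy(records: list, grade_needed: str) -> dict:
    """Decide whether the assistant may answer or must hand off to a human."""
    if not records:
        return {"action": "route_to_human", "reasons": ["no evidence record"]}

    reasons = []
    for r in records:
        if r.confidence in ("conflicting", "stale"):
            reasons.append(f"{r.confidence} record: {r.source_url}")
        if r.has_subjective_note:
            reasons.append(f"subjective agent note on: {r.source_url}")
    if not any(grade_needed in r.grades_covered for r in records):
        reasons.append(f"no record covers grade {grade_needed}")

    if reasons:
        return {"action": "route_to_human", "reasons": reasons}

    # Answer path: cite source types and links, then recommend verification.
    return {
        "action": "answer",
        "cite": [(r.source_type, r.source_url) for r in records],
        "closing": "Please verify directly with the school district "
                   "before making a decision.",
    }
```

Returning the reasons alongside the routing decision gives the agent who picks up the handoff a starting point instead of a bare escalation.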

That is the practical version of AI risk management. NIST's AI Risk Management Framework is built around managing AI-related risks to individuals, organizations, and society. In a real estate workflow, that means the assistant needs traceability, source freshness, and escalation paths before it writes about sensitive location factors.

Use the HUD change as a workflow update, not a content free-for-all

HUD's April 2026 communication is a reason to update brokerage training and AI prompts, not a reason to remove controls. The difference matters. A human agent may be able to discuss school quality data in a consistent, non-discriminatory way, but an AI assistant still needs a controlled source path because it can scale a flawed pattern across hundreds of conversations.

The workflow should be:

- Intake the buyer's question.

- Identify whether the question asks for objective data, subjective judgment, or a recommendation.

- Pull only approved school data sources from the evidence file.

- Show source date, school year, and confidence level in the internal draft.

- Require human review for conflicts, stale records, subjective claims, or buyer-specific advice.

- Send a response that points the buyer to official verification before relying on the information.

This keeps the team useful. Buyers get a real answer path instead of a vague refusal. Agents get a documented source trail. Brokers get a repeatable control that can be audited if a complaint or dispute appears later.
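The second step of that workflow, sorting the question by type, can be approximated with a crude keyword triage. The patterns and category labels below are hypothetical and deliberately conservative; a production system would likely need something more robust and should still default to human review when unsure.

```python
import re

# Hypothetical patterns, for illustration only.
RECOMMENDATION_PATTERNS = [
    r"\bshould (i|we)\b",
    r"\brecommend\b",
    r"\bwhich (neighborhood|area) is (best|better)\b",
]
SUBJECTIVE_PATTERNS = [
    r"\b(good|best|better|bad|worst|top)\b",
    r"\bwhat do you think\b",
]


def classify_question(question: str) -> str:
    """Label a buyer question as a recommendation, a judgment, or a data request."""
    text = question.lower()
    # Check recommendations first: they carry the most risk.
    if any(re.search(p, text) for p in RECOMMENDATION_PATTERNS):
        return "recommendation"
    if any(re.search(p, text) for p in SUBJECTIVE_PATTERNS):
        return "subjective_judgment"
    return "objective_data"


print(classify_question("Which school district is 12 Oak Lane in?"))  # objective_data
print(classify_question("Are the schools good there?"))               # subjective_judgment
print(classify_question("Should we buy in this district?"))           # recommendation
```

Only the `objective_data` label would proceed to the evidence file lookup; the other two go to a human.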

What to build this week

Start with one market area and make the evidence file small. Pull the official district locator links for the counties or districts you serve. Add NCES district boundary references where they help. Record which sources are district-level only and which ones claim attendance-level detail. Add an expiration date for each source so the AI cannot keep recycling old school-year data.
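The expiration date can be as simple as an access date plus a maximum age. The 90-day default below is an assumption chosen for illustration, not a recommended value; a team might instead expire every source at the end of the school year.

```python
from datetime import date, timedelta


def expires_on(accessed_on: date, max_age_days: int = 90) -> date:
    """Last day the source may be used without being re-checked."""
    return accessed_on + timedelta(days=max_age_days)


def is_stale(accessed_on: date, today: date, max_age_days: int = 90) -> bool:
    """A source is stale once today is past its expiration date."""
    return today > expires_on(accessed_on, max_age_days)


checked = date(2026, 1, 1)
print(expires_on(checked))                  # 2026-04-01
print(is_stale(checked, date(2026, 4, 2)))  # True
```

Passing `today` in explicitly, rather than calling `date.today()` inside the function, keeps the check easy to test and to audit later.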

Then add three automation rules:

- If the school evidence record is current and source-backed, the AI can draft a factual response with source links and a verification disclaimer.

- If the evidence is missing, stale, conflicting, or grade-span limited, the AI creates a task for the agent instead of answering directly.

- If the draft includes subjective school quality language or neighborhood desirability language, the draft is blocked for review.
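The three rules above can be sketched as one dispatcher. The status strings, action names, and flagged phrases are hypothetical placeholders; the phrase list in particular is only a starting point that a broker or compliance lead would need to define.

```python
# Hypothetical phrase list, for illustration only.
FLAGGED_PHRASES = (
    "great schools",
    "best schools",
    "bad schools",
    "desirable neighborhood",
    "good area",
)


def apply_automation_rules(evidence_status: str, draft: str) -> str:
    """Map evidence status and draft text to one of three actions."""
    # Rule 2: missing, stale, conflicting, or grade-span-limited evidence
    # becomes an agent task instead of a direct answer.
    if evidence_status != "current":
        return "create_agent_task"
    # Rule 3: subjective quality or desirability language blocks the draft.
    lowered = draft.lower()
    if any(phrase in lowered for phrase in FLAGGED_PHRASES):
        return "block_for_review"
    # Rule 1: current, source-backed evidence and clean language.
    return "send_with_sources_and_disclaimer"


print(apply_automation_rules("stale", "The district locator shows..."))
# create_agent_task
print(apply_automation_rules("current", "This area has great schools."))
# block_for_review
```

The evidence check runs before the language check so that the assistant never produces a polished draft on top of a weak source.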

The goal is not to make AI the school expert. The goal is to make AI disciplined enough to support the buyer question without inventing certainty. School questions are too important to answer from vibes, and too common to handle with one-off manual scrambling. A source-backed evidence file gives the team a middle path: helpful, current, consistent, and reviewable.

Written by

Ben Laube

AI Implementation Strategist & Real Estate Tech Expert

Ben Laube helps real estate professionals and businesses harness the power of AI to scale operations, increase productivity, and build intelligent systems. With deep expertise in AI implementation, automation, and real estate technology, Ben delivers practical strategies that drive measurable results.
