Measure AI Agents by Work Completed, Not Prompts Run

AI adoption is no longer the hard part for many real estate teams. The hard part is knowing whether the work actually got better.

The easiest mistake is to measure the visible activity around AI: prompts written, tools purchased, drafts generated, summaries created, or hours that someone thinks were saved. Those numbers can make a team feel modern without proving that the business is more reliable. A lead can still go untouched. A CRM can still have duplicate records. A follow-up can still be late. A seller inquiry can still receive a generic response. An AI agent can be busy and still not move the business.

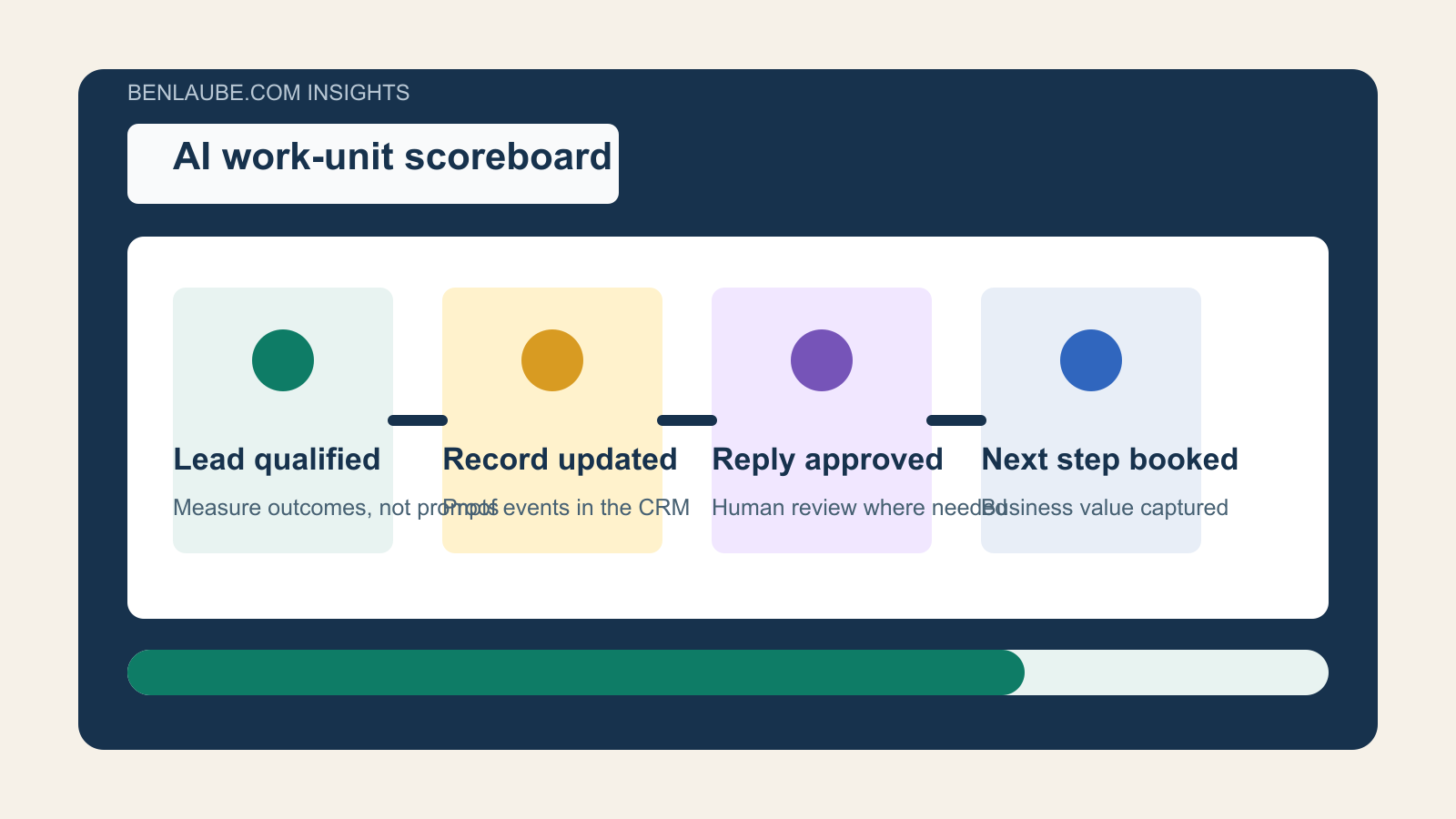

The better standard is a work-unit scoreboard.

A work unit is a completed business action with a proof event. It is not a model call. It is not a draft sitting in a sidebar. It is not an automation run that nobody checked. It is a concrete outcome such as a lead qualified, a missing field corrected, a listing inquiry routed, a reply approved, a CMA request assigned, a showing follow-up logged, an appointment booked, or a past-client referral placed into the right next step.

For real estate operators, this shift matters because AI is moving from novelty to infrastructure. Once AI starts touching lead response, CRM hygiene, listing marketing, client communications, and transaction follow-up, the question changes from "Can it generate something?" to "Can we prove it completed the right work?"

Why the metric needs to change

Salesforce made the language of work measurement more explicit in its fiscal 2026 results, introducing Agentic Work Units as a way to measure tasks accomplished by an AI agent. Salesforce reported 2.4 billion agentic work units delivered to date across Agentforce and Slack, alongside more than 19 trillion tokens processed.

That distinction is useful even for small operators who will never use the same enterprise metric. Tokens describe computation. Work units describe completed tasks. A real estate team does not need to know how many tokens were used to draft a response. It needs to know whether the right lead received the right response, whether the CRM record was updated, whether the appointment path opened, and whether the next owner is visible.

The broader data points in the same direction. Salesforce's 2026 State of Sales research found that 87% of sales organizations already use AI for tasks such as prospecting, forecasting, lead scoring, or email drafting. It also found that 54% of sellers have used agents, and nearly nine in ten plan to do so by 2027.

But adoption is not the same as operating leverage. The same Salesforce research found that 51% of sales leaders with AI say disconnected systems are slowing AI initiatives, while 74% of sales professionals are focusing on data cleansing. High performers are especially aggressive about that work, with 79% prioritizing data hygiene compared with 54% of underperformers.

That is the practical lesson: if the data layer and proof layer are weak, AI activity becomes hard to trust.

Real estate has the same measurement gap

NAR's 2025 Technology Survey shows real estate agents are already using AI. NAR reported that 46% of agents who are REALTORS use AI-generated content, 20% use AI tools daily, and 22% use them weekly. At the same time, 46% said AI had no noticeable impact on their business.

That gap is the opportunity. It is not enough to say that an agent uses ChatGPT, a CRM assistant, an AI dialer, a listing-copy tool, or a voice receptionist. The operator needs a scoreboard that shows whether AI is removing friction from revenue-producing workflows.

For a brokerage, team, or solo agent, the scoreboard should live close to the CRM and the customer journey. It should answer questions like:

- How many new leads did AI enrich with usable context?

- How many duplicates did the system flag or merge for review?

- How many first responses were drafted, approved, and sent inside the service-level target?

- How many seller valuation requests moved to a booked consultation?

- How many buyer inquiries received a property-specific next step?

- How many stale opportunities were reactivated with a human-approved message?

- How many client promises were logged as completed tasks?

- How many exceptions required human review?

Those questions are more useful than "How many prompts did the team run this week?"

Build the scoreboard around proof events

The best AI scoreboard starts with proof events, not dashboards.

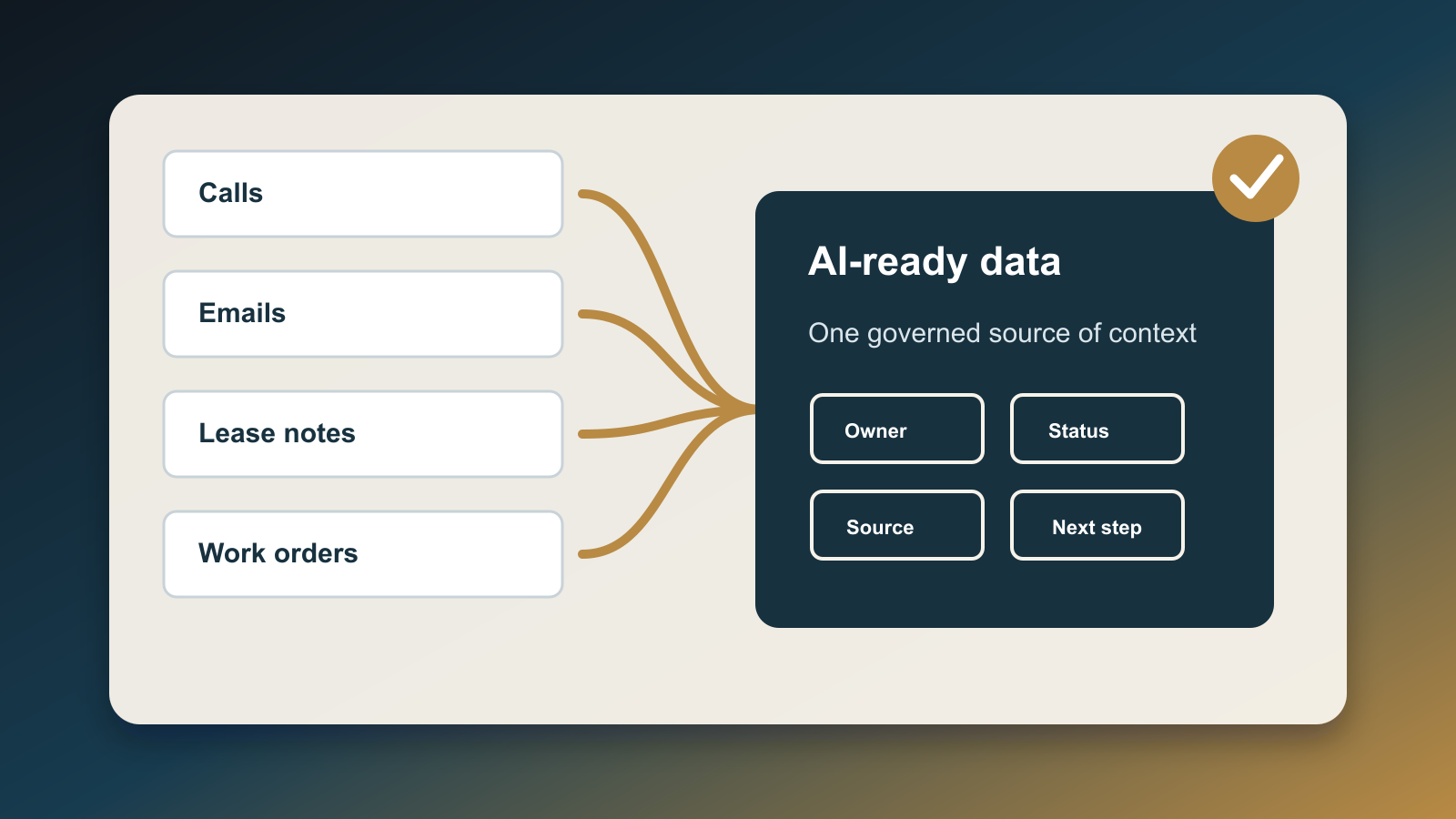

A proof event is the logged evidence that something happened. In a real estate CRM, that could be a call disposition, SMS sent, appointment booked, showing feedback recorded, lender intro made, valuation delivered, nurture sequence selected, or next task scheduled. In a marketing system, it could be a campaign variation approved, content updated, review response queued, or landing-page lead source captured.

Each proof event should include five fields.

1. Workflow

Name the business process: internet buyer lead, seller valuation request, open-house follow-up, past-client referral, listing inquiry, transaction milestone, post-close review request, or expired nurture sequence.

2. Work unit

Define the completed action. Avoid vague labels like "AI helped." Use concrete labels such as "lead enriched," "missing timeline requested," "duplicate flagged," "first response sent," "appointment booked," "seller packet prepared," or "follow-up task completed."

3. Owner

Show whether the action was completed by a person, an automation, an AI agent, or a human-reviewed AI workflow. This prevents accountability from disappearing into the tool.

4. Outcome

Record the result: accepted, edited, rejected, escalated, booked, no response, bad data, not ready, duplicate, or needs review. This is where the team learns whether AI is improving judgment or just increasing volume.

5. Business link

Tie the action back to a contact, property, opportunity, appointment, listing, transaction, or campaign. A work unit without a business object is hard to audit later.

This structure gives a small team the same discipline that large companies are trying to build: AI tied to workflow outcomes, not scattered experimentation.
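As a rough illustration, the five fields could be captured in a small record type. This is only a sketch, not a schema from any particular CRM: the names `ProofEvent`, `Owner`, and `logged_at`, and the `contact:1042` identifier format, are all hypothetical. The timestamp is an extra field beyond the five, added on the assumption that events will later be grouped by week.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Owner(Enum):
    """Who completed the action (the four owner types named above)."""
    PERSON = "person"
    AUTOMATION = "automation"
    AI_AGENT = "ai_agent"
    HUMAN_REVIEWED_AI = "human_reviewed_ai"


@dataclass(frozen=True)
class ProofEvent:
    workflow: str       # e.g. "seller valuation request"
    work_unit: str      # e.g. "appointment booked", never "AI helped"
    owner: Owner
    outcome: str        # e.g. "accepted", "edited", "escalated"
    business_link: str  # hypothetical ID format, e.g. "contact:1042"
    # Assumption: a UTC timestamp, not one of the five fields in the article.
    logged_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def __post_init__(self):
        # An event with a blank field cannot be audited later, so reject it.
        for name in ("workflow", "work_unit", "outcome", "business_link"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must not be empty")


event = ProofEvent(
    workflow="internet buyer lead",
    work_unit="first response sent",
    owner=Owner.HUMAN_REVIEWED_AI,
    outcome="edited",
    business_link="contact:1042",
)
```

Making the record immutable and rejecting blank fields is one design choice among several; the point is that an event missing its workflow, outcome, or business link never reaches the scoreboard.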

What to track first

Do not start with every workflow. Pick one where missed work is expensive and visible.

For most real estate teams, the strongest first scoreboard is new lead response. It is frequent, measurable, and directly tied to revenue.

Track five work units:

- Lead captured with source and intent.

- Contact record created or matched without duplication.

- First response drafted with the correct property, timeline, and source context.

- Human review completed when confidence is low or the message touches sensitive topics.

- Next step logged: call attempted, appointment booked, nurture started, bad lead, no response, or reassigned.

This gives the operator a weekly view of the actual customer journey, and each gap points to a likely cause. If lead volume is high but first responses are slow, the problem is probably capacity. If drafts are fast but many are edited, the problem is probably context or prompt design. If responses go out but next steps are not logged, the problem is follow-through. If duplicates are common, the problem is data hygiene.

The scoreboard turns "AI is working" into a set of operational facts.
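The weekly reading described above can be sketched as a few rate checks. Everything here is illustrative: the `LeadResponseWeek` fields, the `diagnose` function, and the 25% threshold are assumptions, not figures from any of the cited reports. "Slow" first responses are approximated as responses that missed the service-level target.

```python
from dataclasses import dataclass


@dataclass
class LeadResponseWeek:
    """Hypothetical weekly counts for the new-lead-response workflow."""
    leads_captured: int
    duplicates_found: int
    drafts_created: int
    drafts_edited: int
    responses_sent: int
    responses_within_sla: int
    next_steps_logged: int


def rate(part: int, whole: int) -> float:
    # Avoid dividing by zero in a week with no activity.
    return part / whole if whole else 0.0


def diagnose(week: LeadResponseWeek, threshold: float = 0.25) -> list[str]:
    """Return likely problem areas; the threshold is an arbitrary example."""
    findings = []
    late = week.responses_sent - week.responses_within_sla
    if rate(late, week.responses_sent) > threshold:
        findings.append("capacity")
    if rate(week.drafts_edited, week.drafts_created) > threshold:
        findings.append("context or prompt design")
    unlogged = week.responses_sent - week.next_steps_logged
    if rate(unlogged, week.responses_sent) > threshold:
        findings.append("follow-through")
    if rate(week.duplicates_found, week.leads_captured) > threshold:
        findings.append("data hygiene")
    return findings


week = LeadResponseWeek(
    leads_captured=40,
    duplicates_found=2,
    drafts_created=36,
    drafts_edited=18,
    responses_sent=36,
    responses_within_sla=33,
    next_steps_logged=30,
)
print(diagnose(week))  # ['context or prompt design']
```

In this made-up week, half the drafts were edited while the other rates stayed under the threshold, so the only flag raised is context or prompt design. A real team would tune the threshold per check rather than share one value.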

Marketing teams need the same discipline

The same idea applies to marketing automation. HubSpot's 2026 State of Marketing report says AI is reshaping how teams find and engage customers, and it lists AI-powered personalization, automation, brand-value content, AI search adaptation, and content repurposing among the top marketing trends. It also notes that marketers still face challenges with burnout, proving ROI, and platform changes.

That is exactly why a work-unit lens helps. A marketing team should not only count AI-generated posts or emails. It should track whether the work was approved, personalized to the correct audience, published in the right channel, tied to a campaign, and connected to a conversion path.

For a real estate business, useful marketing work units might include:

- Neighborhood page updated with fresh market context.

- Listing description drafted and reviewed for compliance.

- Review response prepared and approved.

- Past-client email personalized by segment.

- Blog post refreshed for AI search and internal links.

- Lead magnet form tested and source attribution verified.

Again, the point is not more AI output. The point is more accountable work.

The operator standard

McKinsey's 2025 State of AI survey found that most organizations are still experimenting or piloting rather than scaling AI across the enterprise. It also found that only 39% report EBIT impact at the enterprise level, even though 64% say AI is enabling innovation. One key finding was that high performers redesign workflows.

That is the standard real estate teams should borrow. AI becomes valuable when it is attached to a redesigned workflow with clear ownership, clean data, and visible proof.

The practical rule is simple: do not deploy an AI agent unless you can name the work units it is allowed to complete and the proof events it must leave behind.
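One way to make that rule checkable is to write the agent's scope down as data before it goes live. The sketch below is an assumption about how a team might do that; `AgentCharter`, its fields, and the example work units and proof events are hypothetical names, not part of any product.

```python
from dataclasses import dataclass, field


@dataclass
class AgentCharter:
    """Hypothetical record of what an AI agent may do and what it must log."""
    agent_name: str
    allowed_work_units: set[str] = field(default_factory=set)
    # Maps each allowed work unit to the proof event it must leave behind.
    required_proof_events: dict[str, str] = field(default_factory=dict)

    def deployable(self) -> bool:
        # Deployable only if at least one work unit is named and every
        # named work unit has a non-empty proof event attached.
        return bool(self.allowed_work_units) and all(
            self.required_proof_events.get(unit)
            for unit in self.allowed_work_units
        )

    def permits(self, work_unit: str) -> bool:
        # An agent without a complete charter is permitted nothing.
        return self.deployable() and work_unit in self.allowed_work_units


charter = AgentCharter(
    agent_name="lead-responder",
    allowed_work_units={"lead enriched", "first response drafted"},
    required_proof_events={
        "lead enriched": "CRM fields updated",
        "first response drafted": "draft saved for review",
    },
)
print(charter.deployable())                   # True
print(charter.permits("appointment booked"))  # False
```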

Start small. Pick one workflow. Define the work units. Decide which ones can be fully automated, which ones require human approval, and which ones should only produce recommendations. Add a weekly scoreboard that shows volume, completion rate, edit rate, exception rate, and revenue-linked outcomes.
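A weekly scoreboard of this kind can be computed from nothing more than the logged outcomes. The sketch below is a minimal version under stated assumptions: which outcomes count as "completed" or as "exceptions" is a judgment call made here for illustration, and booked appointments stand in for revenue-linked outcomes. The function name `weekly_scoreboard` and both outcome sets are hypothetical.

```python
from collections import Counter

# Assumed groupings of the outcome labels; adjust to the team's definitions.
COMPLETED = {"accepted", "edited", "booked"}
EXCEPTIONS = {"escalated", "needs review", "bad data"}


def weekly_scoreboard(outcomes: list[str]) -> dict[str, float]:
    """Summarize one week of proof-event outcomes for a single workflow."""
    counts = Counter(outcomes)
    volume = len(outcomes)

    def share(labels: set[str]) -> float:
        # Fraction of all events whose outcome falls in the given set.
        return sum(counts[label] for label in labels) / volume if volume else 0.0

    return {
        "volume": volume,
        "completion_rate": share(COMPLETED),
        "edit_rate": share({"edited"}),
        "exception_rate": share(EXCEPTIONS),
        "booked": counts["booked"],  # stand-in for revenue-linked outcomes
    }


outcomes = (
    ["accepted"] * 5
    + ["edited"] * 2
    + ["booked"]
    + ["escalated"]
    + ["no response"]
)
board = weekly_scoreboard(outcomes)
print(board["completion_rate"], board["edit_rate"], board["exception_rate"])
# 0.8 0.2 0.1
```

Comparing these numbers week over week, rather than reading any single value in isolation, is what shows whether the workflow is actually improving.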

When the scoreboard improves, expand the system. When it does not, fix the workflow before buying another tool.

AI will keep getting cheaper, faster, and more capable. That makes measurement more important, not less. The teams that win will not be the ones with the most prompts. They will be the ones that can prove more of the right work got done.

Sources

- Salesforce, "Salesforce Delivers Record Fourth Quarter Fiscal 2026 Results" (February 25, 2026): https://investor.salesforce.com/news/news-details/2026/Salesforce-Delivers-Record-Fourth-Quarter-Fiscal-2026-Results/

- Salesforce, "The Productivity Gap: New Survey Shows 9 in 10 Sellers Are Betting on AI and Agents To Help" (February 3, 2026): https://www.salesforce.com/news/stories/state-of-sales-report-announcement-2026/

- National Association of REALTORS, "REALTORS Embrace AI, Digital Tools to Enhance Client Service, NAR Survey Finds" (September 18, 2025): https://www.nar.realtor/newsroom/realtors-embrace-ai-digital-tools-to-enhance-client-service-nar-survey-finds

- HubSpot, "2026 state of marketing: Data from 1,500+ global marketers" (updated April 10, 2026): https://blog.hubspot.com/marketing/hubspot-blog-marketing-industry-trends-report

- McKinsey, "The state of AI in 2025: Agents, innovation, and transformation" (November 5, 2025): https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai/

Written by

Ben Laube

AI Implementation Strategist & Real Estate Tech Expert

Ben Laube helps real estate professionals and businesses harness the power of AI to scale operations, increase productivity, and build intelligent systems. With deep expertise in AI implementation, automation, and real estate technology, Ben delivers practical strategies that drive measurable results.
